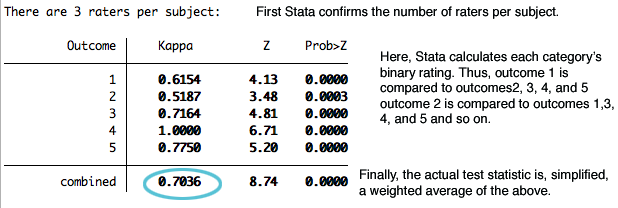

Kappa inter-rater reliability for multiple raters (Cohen's kappa for multiple raters) - egyptianmagiceu.com - hadleysocimi.com

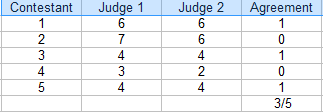

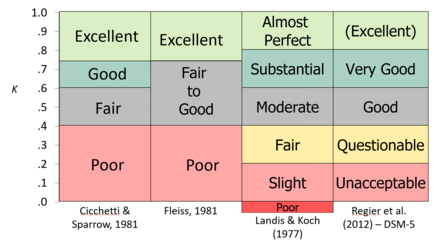

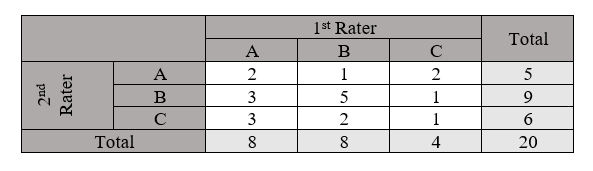

Cohen's Kappa and Fleiss' Kappa— How to Measure the Agreement Between Raters | by Audhi Aprilliant | Medium

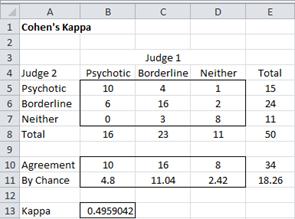

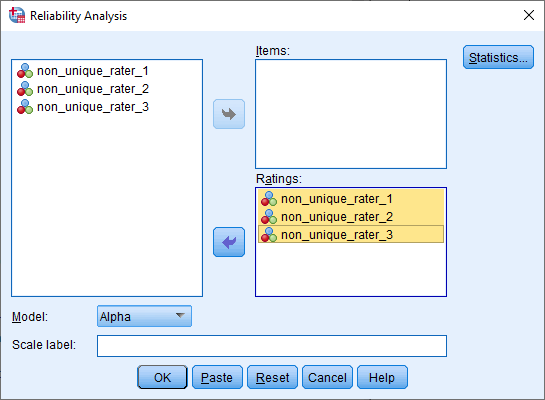

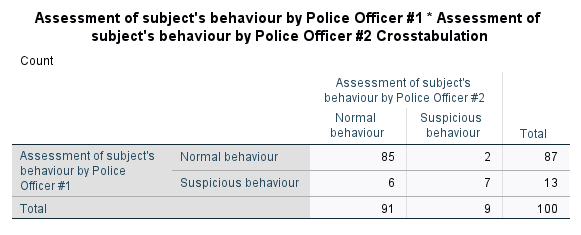

Cohen's kappa in SPSS Statistics - Procedure, output and interpretation of the output using a relevant example | Laerd Statistics

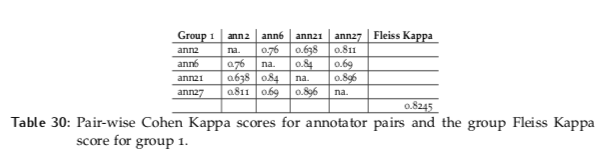

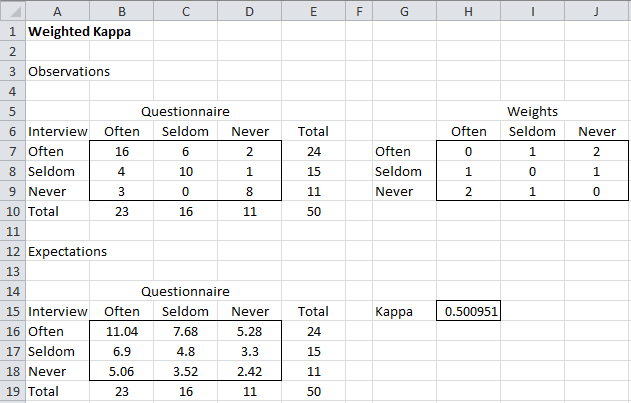

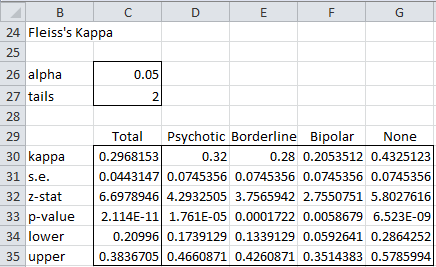

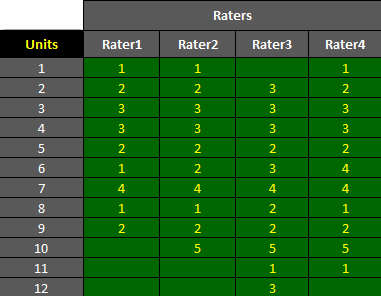

AgreeStat/360: computing weighted agreement coefficients (Conger's kappa, Fleiss' kappa, Gwet's AC1/AC2, Krippendorff's alpha, and more) for 3 raters or more